The Private Repo: Is it Just a Myth Now?

Why Your "Private" Code May Not Be as Private as You Think

You’re working on a private repository. Maybe it’s a hackathon project. Maybe it’s code for a stealth startup. Maybe it’s just something you’re not ready to show anyone yet.

You install GitHub Copilot, Codex, Cursor, or Claude. You turn on “privacy mode” and even go into settings and disable “training on my data”.

So, your code is secret…right?

Not entirely. The digital walls of your private repository may be more permeable than they seem.

The Illusion of Control

GitHub recently emailed all its users about a change to its data usage policy: it will collect interaction data from all users, “including inputs, outputs, code snippets, and associated context”, to train its models (source). The most jarring part is that this is enabled by default unless you explicitly opt out.

On its face, that doesn’t sound so bad. If you support AI, why wouldn’t you want to contribute to improving it?

But that’s not really the problem.

The problem isn’t contribution. It’s consent.

Voluntarily helping to build better AI is radically different from being signed up to do so without clear awareness. And the “data” that might feed into such a system could include proprietary code, sensitive logic, or work that was never intended to leave your own machine.

Privacy Settings… or a Maze?

Cursor has privacy mode.

Claude has opt-outs.

Somewhere in your account, Copilot has settings.

Codex has three separate settings behind three separate toggles in three separate menus that don’t talk to each other (source, source2).

(Almost like it was designed to be confusing.)

But are these the solution to protecting our data?

The answer is more nuanced than a simple yes or no. To understand how safe our data really is, let’s follow the journey of your code.

Where does your data go?

One of the most overlooked aspects of modern AI tooling is the slick “pre-read”. Before you even begin a session, your AI assistant is already taking a first pass at your code. It isn’t passively waiting; it’s actively processing your project in the background. Most modern AI coding tools operate as Retrieval-Augmented Generation (RAG) systems (rag-pipeline), meaning they don’t “know” your code but dynamically fetch relevant parts of it from a database every time you ask a question.

The second you open your project in a tool like Cursor, a background process starts monitoring your entire directory. Without waiting for a prompt, it reads your file tree and begins to index and chunk your codebase into small pieces. Tools often use syntax-aware parsers to break code into meaningful chunks like functions, classes, and logical blocks before embedding them. These chunks, which mean little in isolation, are then sent to an embedding model that converts the text of your codebase into vectors (source): numerical representations of the data that, along with metadata, get stored in a vector database.

In privacy mode, the plaintext of your code is not permanently stored, but the embeddings and metadata are. The vector database essentially forms a map of your private repository on someone else’s servers (rag-systems).
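The chunk, embed, and store steps described above can be sketched in a few lines. This is a minimal illustration, not how any particular tool works: `chunk_source`, `toy_embed`, and `index_record` are hypothetical names, the "embedding" is a hashed bag-of-tokens stand-in for a real embedding model, and the record layout (path, line range, content hash, vector) is an assumption based on the kind of metadata mentioned above.

```python
import ast
import hashlib
import math
import re


def chunk_source(source: str, path: str) -> list[dict]:
    """Split Python source into one chunk per top-level function or class."""
    tree = ast.parse(source)
    chunks = []
    for node in tree.body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            chunks.append({
                "path": path,
                "name": node.name,
                "start_line": node.lineno,
                "end_line": node.end_lineno,
                "text": ast.get_source_segment(source, node),
            })
    return chunks


def toy_embed(text: str, dims: int = 64) -> list[float]:
    """Stand-in for an embedding model: hash each identifier into a bucket."""
    vec = [0.0] * dims
    for token in re.findall(r"[A-Za-z_]\w*", text.lower()):
        bucket = int(hashlib.sha256(token.encode("utf-8")).hexdigest(), 16) % dims
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]  # unit length, convenient for cosine similarity


def index_record(chunk: dict) -> dict:
    """Assumed shape of a stored record: vector plus metadata, no plaintext."""
    return {
        "path": chunk["path"],
        "name": chunk["name"],
        "lines": (chunk["start_line"], chunk["end_line"]),
        "content_hash": hashlib.sha256(chunk["text"].encode("utf-8")).hexdigest(),
        "vector": toy_embed(chunk["text"]),
    }


SAMPLE = '''
def add(a, b):
    return a + b

class Greeter:
    def greet(self, name):
        return "hello " + name
'''

records = [index_record(c) for c in chunk_source(SAMPLE, "demo.py")]
for rec in records:
    print(rec["path"], rec["name"], rec["lines"], rec["content_hash"][:12])
```

Even in this toy version, the stored record reveals file names, symbol names, and where each block sits in the file, without holding a single line of source text.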

You Didn’t Just Send a Prompt

When you type a prompt into the tool, the prompt is also converted into a vector using the same embedding model, so that it can be compared mathematically with the vectors already in the vector database. The tool then searches the database for the closest chunks (semantic search using cosine similarity).
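As a rough sketch of that retrieval step, cosine similarity scores how closely two vectors point in the same direction, and the highest-scoring chunks are returned. The three-dimensional vectors and chunk names below are made up for illustration; real embeddings have hundreds or thousands of dimensions, and `top_k` is a hypothetical helper rather than any vendor's API.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the names of the k chunks most similar to the query vector."""
    scored = [(cosine_similarity(query_vec, vec), name) for name, vec in index.items()]
    scored.sort(reverse=True)
    return [name for _, name in scored[:k]]


# Made-up vectors standing in for embedded code chunks.
INDEX = {
    "auth.py::login": [0.9, 0.1, 0.0],
    "auth.py::logout": [0.8, 0.3, 0.1],
    "billing.py::charge": [0.0, 0.2, 0.9],
}

query = [1.0, 0.0, 0.0]  # pretend this is the embedded user prompt
nearest = top_k(query, INDEX, k=2)
print(nearest)
```

With these toy values the two `auth.py` chunks rank above the billing chunk, which is the behavior the paragraph above describes: the question pulls in whichever pieces of your repository sit closest to it in vector space.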

The tool then combines:

Your original prompt

Retrieved code chunks

The file you currently have open

Your cursor position

System prompts

Git history and conversation history (sometimes)

to build the final augmented prompt that gets sent to the LLM.
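To make the list above concrete, here is a minimal sketch of how such pieces might be stitched into one payload. The `EditorContext` fields, section labels, and layout are all hypothetical; actual tools use their own undisclosed formats.

```python
from dataclasses import dataclass, field


@dataclass
class EditorContext:
    """Hypothetical bundle of everything the tool gathers for one request."""
    user_prompt: str
    retrieved_chunks: list[str]
    open_file_path: str
    open_file_text: str
    cursor_line: int
    system_prompt: str = "You are a coding assistant."
    history: list[str] = field(default_factory=list)


def build_augmented_prompt(ctx: EditorContext) -> str:
    """Concatenate all context sections into the text sent to the model."""
    sections = [
        ("SYSTEM", ctx.system_prompt),
        ("RETRIEVED CODE", "\n---\n".join(ctx.retrieved_chunks)),
        (f"OPEN FILE {ctx.open_file_path} (cursor at line {ctx.cursor_line})",
         ctx.open_file_text),
    ]
    if ctx.history:  # included only sometimes, as noted above
        sections.append(("HISTORY", "\n".join(ctx.history)))
    sections.append(("USER", ctx.user_prompt))
    return "\n\n".join(f"[{title}]\n{body}" for title, body in sections)


ctx = EditorContext(
    user_prompt="Why does login fail?",
    retrieved_chunks=["def login(user): ...", "def check_token(t): ..."],
    open_file_path="auth.py",
    open_file_text="API_KEY = 'example-not-a-real-key'",
    cursor_line=1,
)
payload = build_augmented_prompt(ctx)
print(payload)
```

The one-line question is a small fraction of the payload. The open file, including anything sensitive in it, travels along with it.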

The model processes this and streams the response back through the tool’s backend before it reaches your IDE.

There’s More Than One Code Spy

In parallel to everything above, a telemetry event fires to the tool’s analytics infrastructure. It is separate from the inference pipeline and includes lots of metadata. (Telemetry simply refers to automatic data collection about how you use a system: what you click, type, accept, or change.)

If you edit a file based on the response:

The file watcher detects the change

Re-chunks the file

Re-embeds it

Updates the vector database

This creates a continuous, real-time feedback loop.
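A minimal sketch of that loop, assuming change detection by content hash: `sync_index` is a hypothetical function, files are modeled as an in-memory dictionary instead of a real file watcher, and the re-chunk and re-embed work is reduced to a comment.

```python
import hashlib


def content_hash(text: str) -> str:
    """Fingerprint of a file's contents."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def sync_index(files: dict[str, str], index: dict[str, str]) -> list[str]:
    """Bring the index up to date and return the paths that were re-processed."""
    changed = []
    for path, text in files.items():
        digest = content_hash(text)
        if index.get(path) != digest:
            # A real tool would re-chunk and re-embed the file here,
            # then upload the new vectors to the remote database.
            index[path] = digest
            changed.append(path)
    for path in list(index):
        if path not in files:
            del index[path]  # forget files that no longer exist
    return changed


files = {"auth.py": "def login(): ...", "billing.py": "def charge(): ..."}
index: dict[str, str] = {}

first_pass = sync_index(files, index)    # everything is new
second_pass = sync_index(files, index)   # nothing changed
files["auth.py"] = "def login(user): ..."
third_pass = sync_index(files, index)    # only the edited file

print(first_pass, second_pass, third_pass)
```

Every accepted suggestion that touches a file triggers another pass like the third one, so the remote index keeps tracking your working copy as you edit.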

The Full Data Flow

To summarize, data flows through:

Your local codebase → file ingester → chunking engine → embedding model → vector database → LLM → response back in your IDE.

At no point, in most common implementations of this pipeline, is your data purely local. All of this transmission happens without you ever being asked whether you wanted your code chunked, without being told which parts of your files became embeddings, and without any receipt showing what was transmitted, from where, or to whom.

Moreover, if you haven’t disabled training on your data, telemetry flows on to these LLM providers’ analytics infrastructure, such as Anthropic (AWS) (source) and OpenAI (Microsoft Azure), and potentially even to Datadog and MongoDB for logging. This stored data is then used to train and improve these companies’ models.

Across these steps:

A Claude prompt flows through the servers of Anthropic, AWS and Google Cloud.

A GitHub Copilot prompt touches GitHub, Microsoft Azure, OpenAI and AWS/Anthropic or Google Cloud servers, depending on cloud selection (source).

Cursor passes data through Cursor, AWS, Cloudflare, Microsoft Azure, Turbopuffer (Google Cloud), OpenAI, Anthropic, Google Vertex AI, Fireworks AI, Baseten, xAI and, optionally, Exa/Serp API when web search is involved (source).

Cursor’s own documentation states that it stores embeddings from users’ indexed codebases along with metadata like file names and hashes (source). GitHub Copilot ups the ante and explicitly mentions indexing entire codebases to build deeper understanding and fine-tune model behavior.

Privacy Mode Isn’t Really… Private

In privacy mode, coding models may not train on your private repositories at rest. But while you interact with the tool, by prompting, accepting or rejecting suggestions, or sending thumbs-up or thumbs-down feedback, data is still collected and processed. Your code may be in “privacy mode” in static storage, but these companies still access it during usage.

Privacy mode doesn’t stop:

Code from being processed

Embeddings from being created

Requests from being sent to cloud APIs

This is largely because these steps are required for the tools to function effectively.

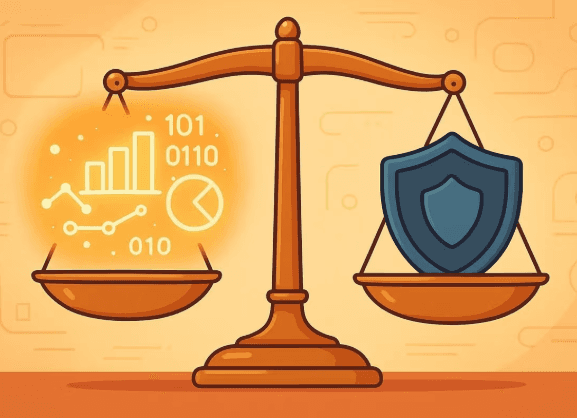

There are actually two separate data flows:

The service pipeline (to run the tool)

The training pipeline (to improve models)

Opting out only affects the second.
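As a purely conceptual model of that distinction (not any vendor's actual settings schema), the opt-out can be thought of as a switch on only one of the two pipelines. `data_destinations` and its keys are hypothetical names.

```python
def data_destinations(training_opt_out: bool) -> dict[str, bool]:
    """Which pipelines receive your data under this simplified model."""
    return {
        # Needed to run the tool at all, so the opt-out does not touch it.
        "service_pipeline": True,
        # The only flow the opt-out switches off.
        "training_pipeline": not training_opt_out,
    }


print(data_destinations(training_opt_out=False))
print(data_destinations(training_opt_out=True))
```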

Security Implications

This isn’t just about privacy.

AI-assisted code is also introducing new security risks (source).

As of June 2025, AI-generated code was associated with over 10,000 new security findings per month, roughly a 10× increase from late 2024 (source).

What was meant to improve developer productivity is also expanding the potential attack surface in some cases.

So What Can We Actually Do?

So now that you and I know our data can never truly be “private”, what do we do about it?

There is no perfect way out, only trade-offs, because the very thing that makes these tools so helpful is also what requires your code to leave your machine: context.

Some options include:

Option 1: Maximum Privacy

“Code like it’s 2015”

No AI assistants, no prompts and no risks, but also no autocomplete, debugging or speed.

But this is safety at the cost of practicality.

Option 2: Local-Only Models

The next option is to use tools that run completely locally and avoid third-party APIs entirely. This comes at the cost of less efficient, heavier local models and a higher token cost.

Option 3: Practical Middle Ground

If Option 2 is also too much of a sacrifice, the next best approach is to use Privacy Mode, opt out of training, use temporary chats and avoid feedback buttons. On GitHub Copilot, the privacy option is under Settings → Copilot → Policies.

This helps but only partially (as we discussed above).

The Bigger Shift

So no, the private repo is not simply a myth, but it’s also not what we think it is anymore. A private repo today is not as private as a private repo was in 2018.

The game has changed. The lines between proprietary code and AI training data have blurred significantly. If you're a developer today, you need to realize that privacy in the age of AI isn't only about where your code lives on a server but also about what happens the moment you interact with it.

The Uncomfortable Truth

All programming models today were trained on millions of lines of publicly available GitHub code (source). This didn’t start with Copilot settings or privacy toggles. The entire AI industry was built first; consent came later. “Public code” became something of a misnomer: human programmers believed public meant open source for humans, but big tech interpreted “public” as an all-you-can-eat buffet for machines.

“Shutting the doors at this point won't change the fact that the AI industry is built on data gathered without asking for a strong indicator of enthusiastic consent.” (The Register)